Managarm: August 2022 Update

Post by Arsen Arsenović (@ArsenArsen), Dennis Bonke (@Dennisbonke), Geert Custers (@geertiebear), Alexander van der Grinten (@avdgrinten), Matt Taylor (@64) and Kacper Słomiński (@qookei).

Introduction

In this post, we will give an update on the progress that the Managarm operating system has made in the last two and a half years; it has been quite a ride! For readers who are unfamiliar with Managarm: it is a microkernel-based OS that supports asynchronicity throughout the entire system while also providing compatibility with lots of Linux software. Feel free to try out Managarm (e.g., in a virtual machine). Our README contains a download link for a nightly image and instructions for trying out the system.

Since our 2019 status update, Managarm has been visible at various places on the internet. Most prominently, Managarm was presented at CppCon 2020, which was held virtually. Our talk focuses on the use of modern C++20 within Managarm (including asynchronicity via coroutines); a recording can be found on YouTube:

Additionally, the YouTube channel Systems with JT featured our project. Check out the video for a more hands-on walkthrough of the system:

We were also present at FOSDEM 2022, where Alexander went over the basics of IPC and the general architecture of the system.

Major Updates

Major updates since our last post include a 64-bit ARM port, the start of the RISC-V port, support for the Rust programming language in user space, and support for the xbps package manager.

Another important addition is our handbook, which describes parts of the system in detail (targeting both users and developers). The handbook is still quite incomplete, but regular updates can be expected in the future.

AArch64 Port

Since July 2020, we have been working on the 64-bit ARM (AArch64) port of Managarm. In its current form, the port is able to boot into Weston and kmscon and run some command-line programs in QEMU. However, a large part of our software repertoire is still untested, and the port in general is still a work in progress. We have an upcoming blog post that will go into more detail about the porting process, implementation details, and the obstacles we faced along the way.

Rust Support in User Space

Project member @64 has been working to bring Rust to Managarm. So far, this has consisted of:

- Adding information about our x86_64-unknown-managarm-system target to rustc (so that it can link with our LLVM and such). This initial work was done by @avdgrinten.

- Adding bindings for mlibc in Rust’s libc crate (managarm/bootstrap-managarm#96).

- Patching Rust’s standard library to support Managarm, and stubbing out functionality that we do not implement yet (managarm/bootstrap-managarm#96).

- Integrating cargo with our build system so that we can cross-compile crates for Managarm (managarm/xbstrap#48).

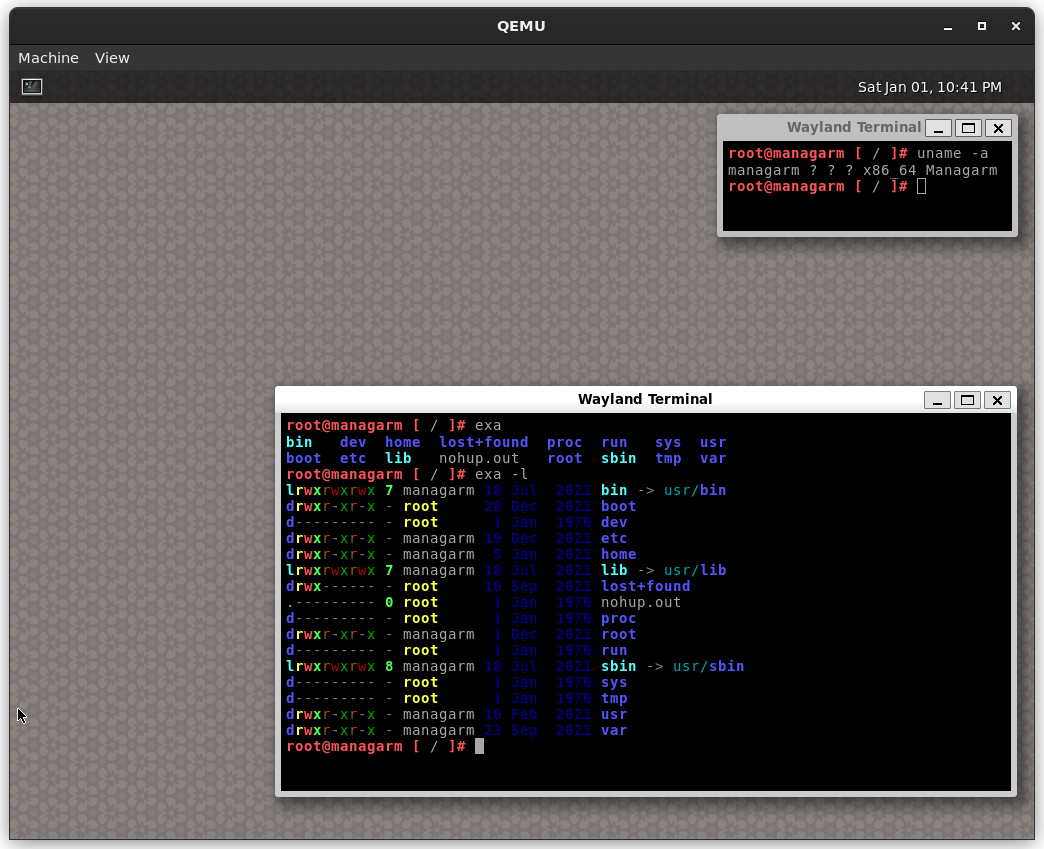

- Porting ripgrep, exa and alacritty (managarm/bootstrap-managarm#102, managarm/bootstrap-managarm#180).

There is still work to be done before all of Rust’s standard library is fully supported within Managarm. Our next priority is upstreaming our patches back into the Rust ecosystem, since maintaining these downstream has turned out to be quite a struggle.

In the long term, we would like to support Rust drivers for Managarm. This will involve adding support for Rust in our IPC protocol codegen tool bragi, and writing a Rust wrapper for our asynchronous syscall API.

Build Servers and xbps Packages

Two years ago, we deployed xbbs, a distributed build server specifically crafted for xbstrap and xbps (i.e., the package manager popularized by the Void Linux distribution). It allows us to efficiently distribute builds of the individual packages of our Managarm distribution across a handful of servers, while only rebuilding the parts of the distribution that have changed since the last build. Additionally, we got closer to our goal of porting xbps itself and using it to manage system packages in our distribution images. You can already get packages built by us from https://builds.managarm.org/, but you cannot yet use xbps within the system itself, although work is ongoing to fix that.
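The "only rebuild what changed" idea can be sketched as a walk over the package dependency graph. The set of packages to rebuild consists of the changed packages plus everything that transitively depends on them. These are then built dependencies-first. This is a hypothetical illustration and not xbbs's actual algorithm. The `DepGraph` type, the `rebuildOrder` function and the package names used to exercise it are made up for this example.

```cpp
#include <cassert>
#include <map>
#include <set>
#include <string>
#include <vector>

// Hypothetical model: deps[p] lists the packages that p needs at build time.
using DepGraph = std::map<std::string, std::vector<std::string>>;

// Returns the packages that must be rebuilt, dependencies first.
std::vector<std::string> rebuildOrder(const DepGraph &deps,
                                      const std::set<std::string> &changed) {
    // Invert the edges: rdeps[d] lists the packages that depend on d.
    std::map<std::string, std::vector<std::string>> rdeps;
    for (const auto &[pkg, ds] : deps)
        for (const auto &d : ds)
            rdeps[d].push_back(pkg);

    // Dirty set: changed packages plus all transitive reverse dependencies.
    std::set<std::string> dirty;
    std::vector<std::string> stack(changed.begin(), changed.end());
    while (!stack.empty()) {
        auto p = stack.back();
        stack.pop_back();
        if (!dirty.insert(p).second)
            continue; // already scheduled
        for (const auto &r : rdeps[p])
            stack.push_back(r);
    }

    // Topological order restricted to the dirty set (DFS post-order).
    std::vector<std::string> order;
    std::set<std::string> visited;
    auto visit = [&](auto &self, const std::string &p) -> void {
        if (!visited.insert(p).second)
            return;
        auto it = deps.find(p);
        if (it != deps.end())
            for (const auto &d : it->second)
                if (dirty.count(d))
                    self(self, d); // build dirty dependencies first
        order.push_back(p);
    };
    for (const auto &p : dirty)
        visit(visit, p);
    return order;
}
```

Packages outside the dirty set are reused from the previous build. Spreading the resulting work across several build servers is a separate scheduling problem that this sketch leaves out.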

The goals of this “subproject” include:

- Building packages as updates are pushed and checking their validity (finding SONAME changes and similar breaking issues)

- Automated detection of errors caused by inconsistent/unclean build environments

- Centralizing the tracking of reproducibility of packages

- Getting new packages and updates to users faster, and reducing the chance of introducing new errors by spotting them early and notifying maintainers
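The first goal above, catching SONAME changes, boils down to a set comparison. Suppose a rebuilt package no longer provides a SONAME that its previous build provided. Then every package linked against that SONAME is broken and needs a rebuild. The sketch below is a hypothetical illustration and not xbbs code. It assumes the SONAMEs (the `DT_SONAME` entries, as shown by e.g. `readelf -d`) have already been extracted from each build.

```cpp
#include <algorithm>
#include <cassert>
#include <iterator>
#include <set>
#include <string>
#include <vector>

// SONAMEs of the shared libraries shipped by one build of a package.
using Sonames = std::set<std::string>;

// SONAMEs provided by the previous build but missing from the new one.
// Each of these would break the packages that link against it.
std::vector<std::string> droppedSonames(const Sonames &previous, const Sonames &current) {
    std::vector<std::string> dropped;
    // Both sets are sorted, as std::set_difference requires.
    std::set_difference(previous.begin(), previous.end(),
                        current.begin(), current.end(),
                        std::back_inserter(dropped));
    return dropped;
}
```

For instance, a library whose SONAME went from `libfoo.so.1` to `libfoo.so.2` would be reported as having dropped `libfoo.so.1`. A build server could then flag the dependents for a rebuild.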

New features in the kernel, POSIX and other servers

Hardware virtualization (VMX) support. Shortly after our 2019 status update, Managarm received support for hardware virtualization on Intel CPUs (using Intel’s VMX). We plan to extend virtualization support to AMD CPUs as well (which implement AMD SVM instead of VMX). In the long term, this will allow us to support the KVM interface, such that we can run hypervisors like QEMU-KVM natively on Managarm.
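Intel VMX and AMD SVM are advertised through different CPUID bits. This is one reason supporting both vendors takes separate work. Intel VMX is CPUID leaf 1, ECX bit 5. AMD SVM is CPUID leaf 0x80000001, ECX bit 2. The sketch below assumes GCC or Clang (for `<cpuid.h>`) and is not Managarm's kernel code. A set bit only means the CPU implements the extension: firmware may still disable it, and a kernel must enable it explicitly before use.

```cpp
#include <cassert>
#include <cstdint>
#if defined(__x86_64__) || defined(__i386__)
#include <cpuid.h> // GCC/Clang helper for the CPUID instruction
#endif

// CPUID.(EAX=1):ECX bit 5 advertises Intel VMX.
constexpr bool vmxBit(std::uint32_t ecxLeaf1) { return (ecxLeaf1 >> 5) & 1; }
// CPUID.(EAX=0x80000001):ECX bit 2 advertises AMD SVM.
constexpr bool svmBit(std::uint32_t ecxExtLeaf1) { return (ecxExtLeaf1 >> 2) & 1; }

enum class Virt { none, vmx, svm };

// Ask the running CPU which hardware virtualization extension it offers.
Virt detectVirtualization() {
#if defined(__x86_64__) || defined(__i386__)
    unsigned int eax, ebx, ecx, edx;
    // __get_cpuid returns 0 if the requested leaf is not supported.
    if (__get_cpuid(1, &eax, &ebx, &ecx, &edx) && vmxBit(ecx))
        return Virt::vmx;
    if (__get_cpuid(0x80000001, &eax, &ebx, &ecx, &edx) && svmBit(ecx))
        return Virt::svm;
#endif
    return Virt::none;
}
```

Inside a virtual machine without nested virtualization, both bits are typically clear, and the function returns `Virt::none`.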

pthreads. As mentioned in our “Porting Software to Managarm” post, in 2020 we implemented pthread thread creation and other related functions. mlibc has also gained an implementation of pthread cancellation, and we have an upcoming blog post going into detail about it.
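For readers unfamiliar with pthread cancellation, here is a minimal sketch using only standard POSIX calls. Nothing in it is Managarm-specific, and mlibc's internals are not shown. Cancellation is deferred by default, so the target thread only acts on the request at a cancellation point. It then runs its cleanup handlers and exits with `PTHREAD_CANCELED`. The names `worker`, `onCancel` and `cancelWorker` are illustrative.

```cpp
#include <atomic>
#include <cassert>
#include <pthread.h>
#include <unistd.h>

// Set by the cleanup handler when the worker is cancelled.
static std::atomic<bool> cleanupRan{false};

static void onCancel(void *) { cleanupRan = true; }

static void *worker(void *) {
    pthread_cleanup_push(onCancel, nullptr);
    for (;;) {
        // Deferred cancellation acts only at cancellation points:
        // pthread_testcancel() is an explicit one, sleep() is another.
        pthread_testcancel();
        sleep(1);
    }
    pthread_cleanup_pop(0); // never reached, but push/pop must pair lexically
    return nullptr;
}

// Start a worker, cancel it, and check that it ran its cleanup handler
// and exited with PTHREAD_CANCELED.
bool cancelWorker() {
    pthread_t t;
    if (pthread_create(&t, nullptr, worker, nullptr) != 0)
        return false;
    pthread_cancel(t);
    void *result = nullptr;
    pthread_join(t, &result);
    return result == PTHREAD_CANCELED && cleanupRan;
}
```

Older glibc versions may need `-pthread` when compiling and linking this example.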

A new IDL Compiler: bragi. In February 2020, we started work on our own interface description language called bragi. The aim is to replace all of our current protobuf usage with bragi. Although it is not yet fully feature-complete (we still want/need to add features like variants or inheritance), bragi is already mature enough to enable us to refactor some of our IPC protocols (namely, the POSIX, hardware, and filesystem protocols) to use bragi. We also started implementing new protocols (like the ostrace one) based on bragi.
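To illustrate what an IDL compiler like bragi saves us from writing by hand, below is a hand-written encoder and decoder for a small hypothetical message. Generated code does this kind of work, but the wire format here is an assumption made for illustration: little-endian 32-bit integers and length-prefixed strings. It is not bragi's actual encoding, and `OpenRequest` is not a real Managarm message.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <optional>
#include <string>
#include <vector>

// Hypothetical message, as an IDL might declare it:
//   message OpenRequest { string path; uint32 flags; }
struct OpenRequest {
    std::string path;
    std::uint32_t flags = 0;
};

static void putU32(std::vector<std::uint8_t> &out, std::uint32_t v) {
    for (int i = 0; i < 4; i++)
        out.push_back(static_cast<std::uint8_t>(v >> (8 * i))); // little-endian
}

static bool getU32(const std::vector<std::uint8_t> &in, std::size_t &pos, std::uint32_t &v) {
    if (in.size() - pos < 4)
        return false; // truncated input
    v = 0;
    for (int i = 0; i < 4; i++)
        v |= static_cast<std::uint32_t>(in[pos + i]) << (8 * i);
    pos += 4;
    return true;
}

// Serialize the fields in declaration order.
std::vector<std::uint8_t> encode(const OpenRequest &req) {
    std::vector<std::uint8_t> out;
    putU32(out, static_cast<std::uint32_t>(req.path.size()));
    out.insert(out.end(), req.path.begin(), req.path.end());
    putU32(out, req.flags);
    return out;
}

// IPC peers are untrusted, so decoding must reject truncated or oversized input.
std::optional<OpenRequest> decode(const std::vector<std::uint8_t> &in) {
    std::size_t pos = 0;
    std::uint32_t len;
    if (!getU32(in, pos, len) || in.size() - pos < len)
        return std::nullopt;
    OpenRequest req;
    req.path.assign(in.begin() + pos, in.begin() + pos + len);
    pos += len;
    if (!getU32(in, pos, req.flags) || pos != in.size())
        return std::nullopt;
    return req;
}
```

Keeping such code in sync across many protocols by hand is error-prone. Generating it from a single description avoids this, which is the point of an IDL.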

New Drivers: AHCI and NVMe and Storage Improvements. Managarm’s block driver stack received significant updates in 2021. We now have drivers for the two most important modern block device controller interfaces, AHCI and NVMe. The AHCI driver was written by Matt Taylor (@64), while the NVMe driver was contributed by Jin Xue (@Jimx-). Furthermore, thanks to a PR by Geert Custers (@geertiebear), we can now identify partitions by their UUIDs. This feature will make identifying the boot device more robust in the future and is especially important when running Managarm on physical hardware (rather than in a virtual machine).
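As an illustration of partition UUIDs, consider GPT disks. Each partition entry stores a unique partition GUID at byte offset 16 (the partition type GUID is at offset 0). The GUID is stored in mixed-endian form: the first three fields are little-endian, and the last eight bytes are kept as-is. The sketch below formats that GUID and compares it against a user-supplied UUID string. Whether Managarm matches on GPT partition GUIDs, filesystem UUIDs, or both is an assumption here, and the helper names are hypothetical.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cctype>
#include <cstdint>
#include <cstdio>
#include <string>

using Guid = std::array<std::uint8_t, 16>;

// Format an on-disk GPT GUID as the canonical 36-character string.
std::string formatGuid(const Guid &g) {
    char buf[37];
    std::snprintf(buf, sizeof(buf),
                  "%02X%02X%02X%02X-%02X%02X-%02X%02X-%02X%02X-%02X%02X%02X%02X%02X%02X",
                  g[3], g[2], g[1], g[0],        // 32-bit field, little-endian
                  g[5], g[4], g[7], g[6],        // two 16-bit fields, little-endian
                  g[8], g[9], g[10], g[11], g[12], g[13], g[14], g[15]); // as stored
    return buf;
}

// The unique partition GUID lives at offset 16 of a GPT partition entry.
std::string uniquePartitionGuid(const std::uint8_t *entry) {
    Guid g;
    std::copy(entry + 16, entry + 32, g.begin());
    return formatGuid(g);
}

// Users may write UUIDs in lower case, so compare case-insensitively.
bool guidEquals(const std::string &a, const std::string &b) {
    return a.size() == b.size() &&
           std::equal(a.begin(), a.end(), b.begin(), [](char x, char y) {
               return std::toupper(static_cast<unsigned char>(x)) ==
                      std::toupper(static_cast<unsigned char>(y));
           });
}
```

For example, the well-known EFI System Partition type GUID C12A7328-F81F-11D2-BA4B-00A0C93EC93B is stored on disk as the bytes `28 73 2A C1 1F F8 D2 11 BA 4B 00 A0 C9 3E C9 3B`.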

Networking Improvements. Our networking stack (“netserver”) can now connect to TCP servers over IPv4. It supports basic TCP features; however, the server side of the TCP 3-way handshake and path MTU discovery are not implemented yet. Once these gaps are filled, we will have a mostly complete IPv4 stack.
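The following heavily simplified sketch shows what the missing server side of the handshake involves, using the connection states from RFC 793. A client goes CLOSED → SYN-SENT → ESTABLISHED. A server additionally needs LISTEN and SYN-RECEIVED. This is not netserver's code: it omits sequence numbers, retransmission, reset handling and connection teardown. Path MTU discovery is only hinted at through the maximum segment size (MSS) that a given MTU allows.

```cpp
#include <cassert>

// Subset of the TCP connection states from RFC 793 (no teardown states).
enum class TcpState { closed, listen, synSent, synReceived, established };

enum class Event { activeOpen, passiveOpen, recvSyn, recvSynAck, recvAck };

// Next state for the handshake; unexpected events leave the state unchanged
// (a real stack would drop the segment or reset the connection).
TcpState step(TcpState s, Event e) {
    switch (s) {
    case TcpState::closed:
        if (e == Event::activeOpen) return TcpState::synSent;  // client sends SYN
        if (e == Event::passiveOpen) return TcpState::listen;  // server waits for SYN
        break;
    case TcpState::synSent:
        if (e == Event::recvSynAck) return TcpState::established; // client sends ACK
        if (e == Event::recvSyn) return TcpState::synReceived;    // simultaneous open
        break;
    case TcpState::listen:
        if (e == Event::recvSyn) return TcpState::synReceived; // server sends SYN-ACK
        break;
    case TcpState::synReceived:
        if (e == Event::recvAck) return TcpState::established; // handshake complete
        break;
    case TcpState::established:
        break;
    }
    return s;
}

// Largest TCP payload per IPv4 packet for a given MTU, assuming 20-byte IPv4
// and TCP headers without options. Path MTU discovery lowers the MTU used here.
constexpr unsigned mssForMtu(unsigned mtu) { return mtu - 20 - 20; }
```

On Ethernet's usual 1500-byte MTU, `mssForMtu(1500)` is 1460.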

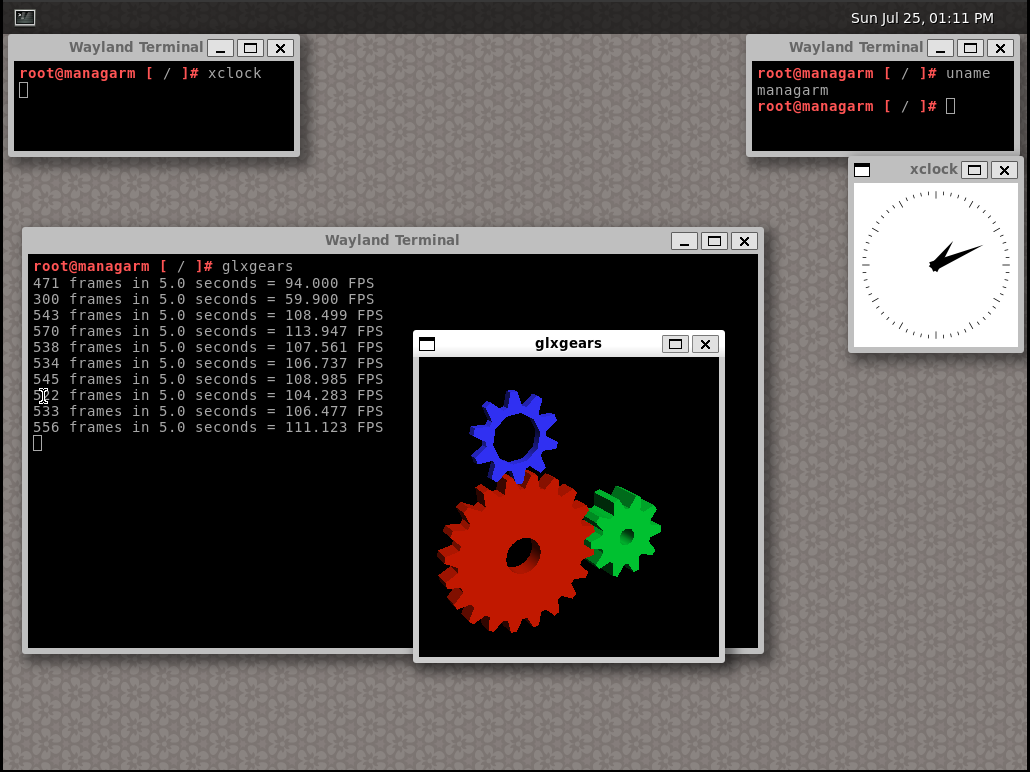

New Ports and Port Updates

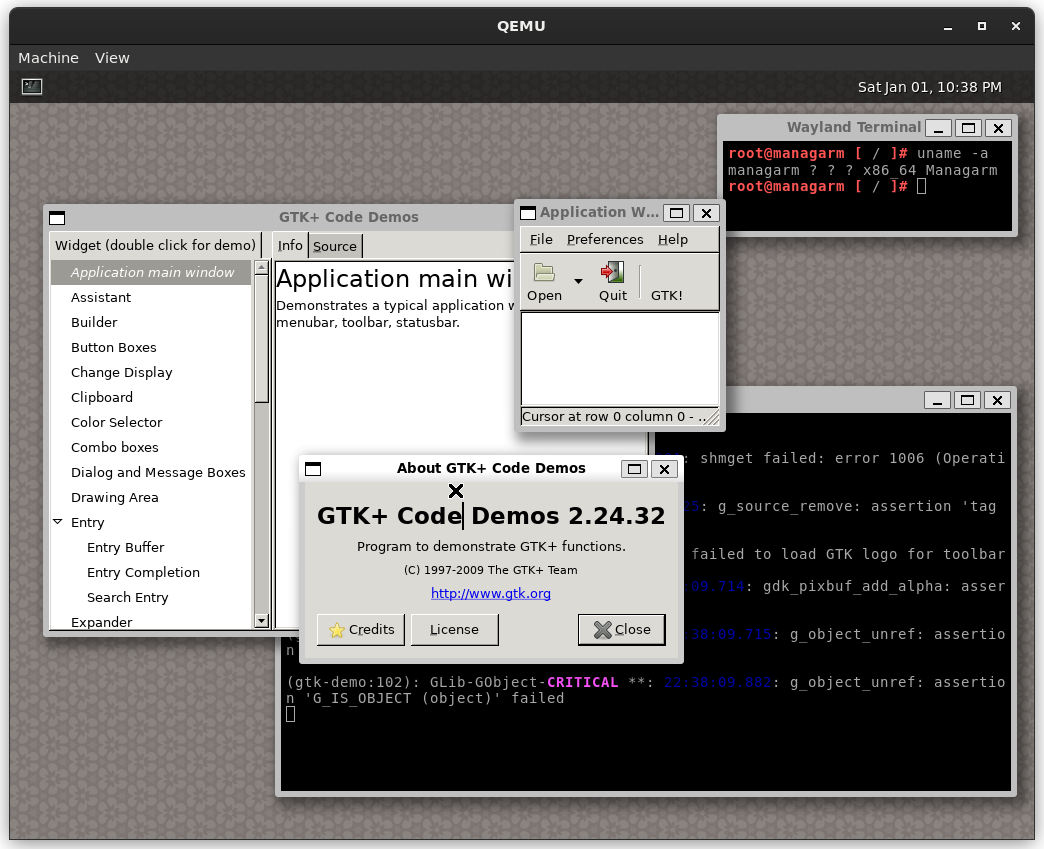

In the last two years, we received a lot of new ports as well as updates to existing ones; our collection now contains over 250 ports! Many of them are nice-to-have things: common UNIX utilities like grep, sed, findutils and gawk, and development tools like python, make and patch. We also got enough of the X11 stack ported to run XWayland and several X-based apps like xclock and gtklife. Other noteworthy additions are a new bootloader called limine, which we now use by default (although grub is still supported at this time and there are no plans to remove that support), and a stripped-down util-linux port, which includes useful utilities like mount and losetup.

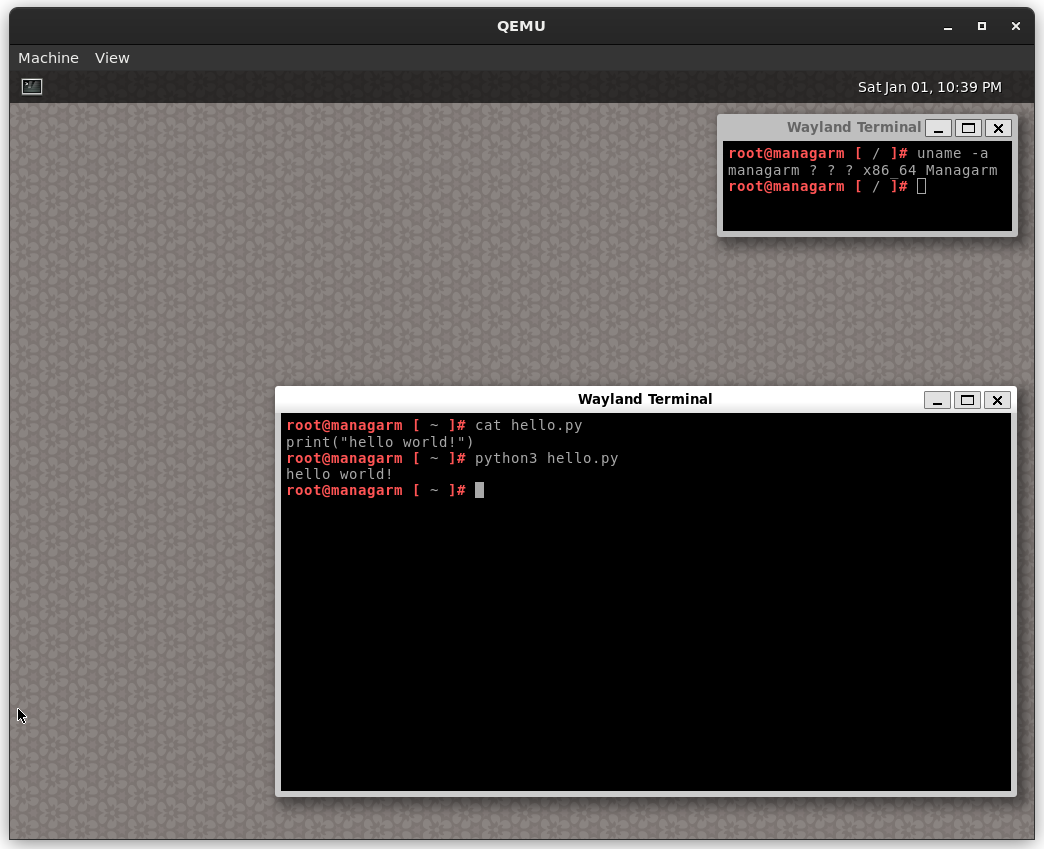

As a blog post without images would be boring, here are some screenshots. First off is Managarm running Python.


After that, we have xclock.


And finally we have exa running.

The road to X11

The road to X11 was quite a bumpy one, with several issues that required digging deep into the X11 codebase. In the end, the biggest issues were a nasty epoll bug and the use of abstract UNIX sockets, which were not yet implemented. With those fixed (and some stubbing of shared memory functions in mlibc), we were able to run the gtk-demo program successfully, paving the way for various other X-based programs.
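Abstract UNIX sockets are a Linux extension. The socket address lives in a separate namespace instead of the filesystem, which is what the `@` in names such as `@/tmp/.X11-unix/X0` conventionally denotes. Such an address is marked by a leading NUL byte in `sun_path`. The name that follows is not NUL-terminated, and the address length passed to `bind`/`connect` must cover exactly the bytes used. The sketch below shows this on Linux. The helper names and the socket name in the usage example are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>
#include <string>
#include <sys/socket.h>
#include <sys/un.h>
#include <unistd.h>

// Fill in an abstract-namespace address and return its exact length.
static socklen_t abstractAddr(const std::string &name, sockaddr_un &addr) {
    assert(name.size() + 1 <= sizeof(addr.sun_path));
    std::memset(&addr, 0, sizeof(addr));
    addr.sun_family = AF_UNIX;
    // sun_path[0] stays '\0'; the name follows without a terminator.
    std::memcpy(addr.sun_path + 1, name.data(), name.size());
    return offsetof(sockaddr_un, sun_path) + 1 + name.size();
}

// Bind a listener under an abstract name, connect to it, and pass one byte.
bool abstractRoundTrip(const std::string &name) {
    sockaddr_un addr;
    socklen_t len = abstractAddr(name, addr);
    int srv = socket(AF_UNIX, SOCK_STREAM, 0);
    int cli = socket(AF_UNIX, SOCK_STREAM, 0);
    bool ok = srv >= 0 && cli >= 0 &&
              bind(srv, reinterpret_cast<sockaddr *>(&addr), len) == 0 &&
              listen(srv, 1) == 0 &&
              connect(cli, reinterpret_cast<sockaddr *>(&addr), len) == 0;
    if (ok) {
        int conn = accept(srv, nullptr, nullptr);
        char c = 'x', r = 0;
        ok = conn >= 0 && write(cli, &c, 1) == 1 && read(conn, &r, 1) == 1 && r == 'x';
        if (conn >= 0)
            close(conn);
    }
    if (cli >= 0)
        close(cli);
    // No unlink() needed: the abstract name disappears with its last socket.
    if (srv >= 0)
        close(srv);
    return ok;
}
```

Getting the address length wrong is a classic pitfall here. Trailing zero bytes are part of an abstract name, so `sizeof(sockaddr_un)` would name a different socket.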

Outside of XWayland, work is ongoing to also run the classic X.Org server, while using its own DRM-based mode setting (instead of Weston’s).

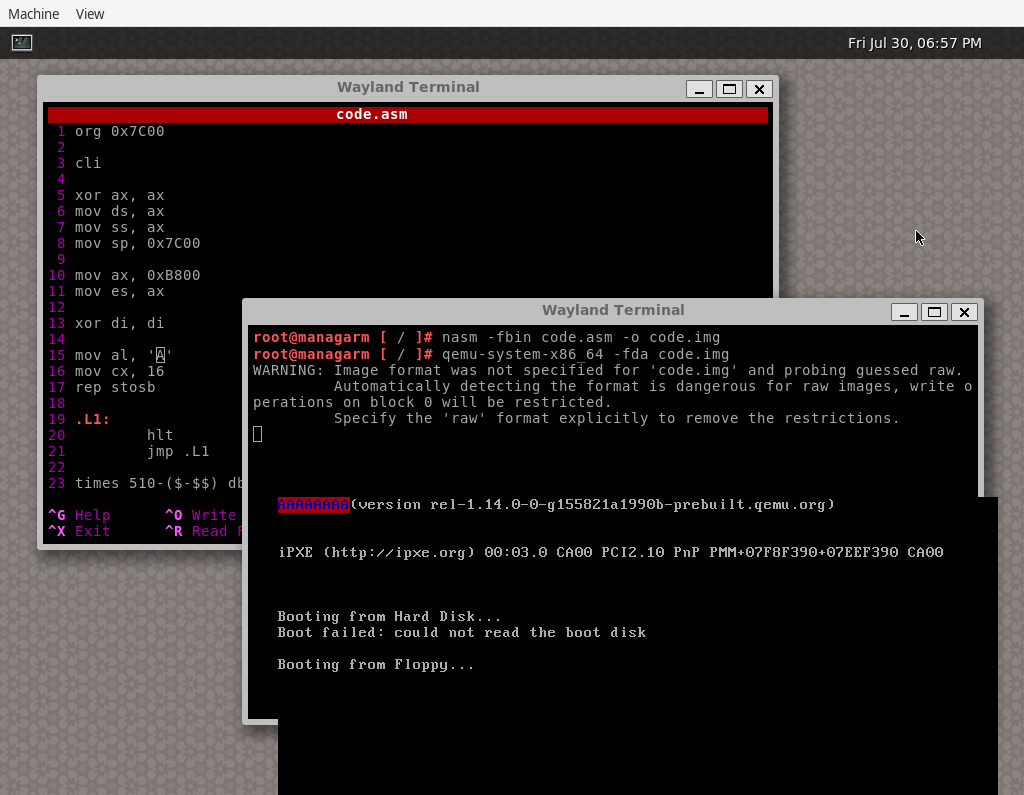

QEMU

Most of the pieces necessary for QEMU were already in place, with the exception of sigaltstack and support for partial munmap/mmap/mprotect. Now that both of these missing features are implemented, we can run QEMU on Managarm, bringing us one step closer to being self-hosting.
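The two features QEMU needed can be exercised with plain POSIX calls. The test program below is an illustrative sketch and not part of Managarm's test suite. First, `sigaltstack` plus `SA_ONSTACK` makes a signal handler run on a separate stack. Second, `munmap`/`mprotect` are applied to one page of a larger mapping, which means "partial" is read here as operating on a sub-range of an existing mapping; the kernel then has to split that mapping into pieces. The `mincore` check relies on Linux returning `ENOMEM` for unmapped ranges.

```cpp
#include <cassert>
#include <cerrno>
#include <cstddef>
#include <cstdint>
#include <cstdlib>
#include <signal.h>
#include <sys/mman.h>
#include <unistd.h>

static char *altBase = nullptr;
static std::size_t altSize = 0;
static volatile sig_atomic_t ranOnAltStack = 0;

static void onSignal(int) {
    char probe; // a local lives on whichever stack the handler runs on
    auto p = reinterpret_cast<std::uintptr_t>(&probe);
    auto lo = reinterpret_cast<std::uintptr_t>(altBase);
    ranOnAltStack = p >= lo && p < lo + altSize;
}

// sigaltstack: a handler installed with SA_ONSTACK runs on the alternate stack.
bool altStackWorks() {
    altSize = 64 * 1024; // well above MINSIGSTKSZ
    altBase = static_cast<char *>(std::malloc(altSize));
    if (!altBase)
        return false;
    bool ok = false;
    stack_t ss{};
    ss.ss_sp = altBase;
    ss.ss_size = altSize;
    if (sigaltstack(&ss, nullptr) == 0) {
        struct sigaction sa{}, old{};
        sa.sa_handler = onSignal;
        sa.sa_flags = SA_ONSTACK;
        sigemptyset(&sa.sa_mask);
        sigaction(SIGUSR1, &sa, &old);
        raise(SIGUSR1); // the handler has run by the time raise() returns
        sigaction(SIGUSR1, &old, nullptr);
        ok = ranOnAltStack;
        stack_t off{};
        off.ss_flags = SS_DISABLE; // stop using the memory before freeing it
        sigaltstack(&off, nullptr);
    }
    std::free(altBase);
    return ok;
}

// Partial munmap/mprotect: act on single pages inside a three-page mapping.
bool partialUnmapWorks() {
    const auto page = static_cast<std::size_t>(sysconf(_SC_PAGESIZE));
    void *mem = mmap(nullptr, 3 * page, PROT_READ | PROT_WRITE,
                     MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);
    if (mem == MAP_FAILED)
        return false;
    char *base = static_cast<char *>(mem);
    base[0] = 'a';
    base[2 * page] = 'c';
    bool ok = munmap(base + page, page) == 0         // punch a hole in the middle
              && mprotect(base, page, PROT_READ) == 0; // first page becomes read-only
    unsigned char vec = 0;
    ok = ok && mincore(base + page, page, &vec) == -1 && errno == ENOMEM; // hole is gone
    ok = ok && base[0] == 'a' && base[2 * page] == 'c'; // outer pages keep their data
    munmap(base, 3 * page); // a range containing the hole may still be unmapped
    return ok;
}
```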

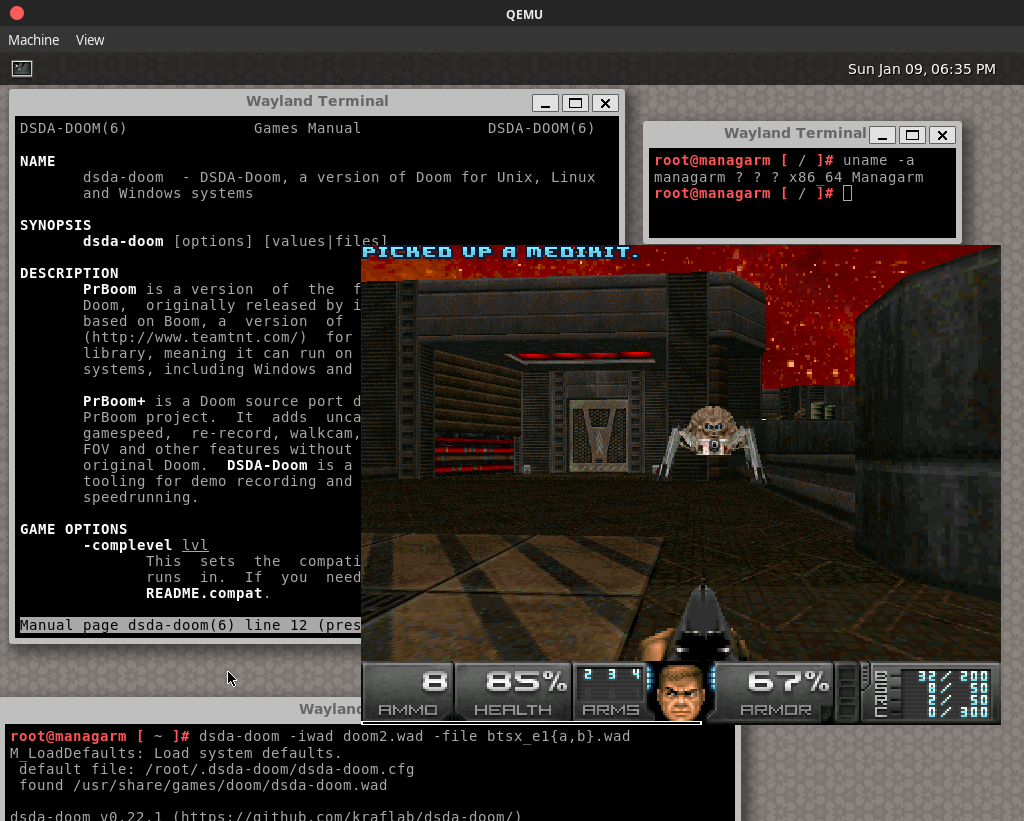

DOOM

Until recently, we did not have any DOOM port, mainly because we could not decide on which source port to use. We eventually decided upon dsda-doom, giving us a modern, yet vanilla DOOM experience, with extra speedrunning features as a bonus.

We also have several new and upcoming ports that we will likely showcase in a follow-up post.

What do we want to achieve in the next year?

Finish porting xbps. As mentioned above, considerable work went into porting the xbps package manager. While the general infrastructure is set up, both inside Managarm and outside of it (in the form of a package repository), some more work is required to get xbps to actually function properly. We aim to implement the missing functionality soon.

Polish the port collection. Currently, we have a lot of ports that work at least partially, but some ports use pretty ugly hacks to get to that state. We should strive to get the quality of these ports up by improving functionality within mlibc and by removing hacks. This also includes adding better tests to check for correctness. We also plan to start upstreaming support patches such that we can remove some patches from the collection.

Complete TTY subsystem. We currently lack, or incorrectly implement, many TTY subsystem features (sessions and signals, process groups, et cetera), which many programs depend on and which matter for day-to-day use of the system. This goal also includes finishing up Unix process credentials and signals.
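To make "sessions and process groups" concrete, the sketch below uses the standard POSIX calls that a complete TTY subsystem has to back. It is not Managarm code, and the function names are illustrative. `setsid()` makes the caller the leader of a new session and of a new process group. `kill()` with a negative PID signals an entire process group, which is how a terminal delivers SIGINT to the foreground job on Ctrl+C.

```cpp
#include <cassert>
#include <signal.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

// A forked child starts a new session; it must become both session leader
// and process group leader, with both IDs equal to its PID.
bool childBecomesSessionLeader() {
    pid_t pid = fork();
    if (pid < 0)
        return false;
    if (pid == 0) {
        bool ok = setsid() == getpid() && getsid(0) == getpid() && getpgrp() == getpid();
        _exit(ok ? 0 : 1);
    }
    int status = 0;
    waitpid(pid, &status, 0);
    return WIFEXITED(status) && WEXITSTATUS(status) == 0;
}

// Signal a whole process group at once, as a terminal does for job control.
bool signalProcessGroup() {
    pid_t pid = fork();
    if (pid < 0)
        return false;
    if (pid == 0) {
        setpgid(0, 0); // own process group, ID == PID
        pause();       // wait until a signal arrives
        _exit(0);
    }
    setpgid(pid, pid); // also set from the parent to avoid a race
    if (kill(-pid, SIGTERM) != 0) // negative PID: every member of group `pid`
        kill(pid, SIGKILL);      // fallback so that waitpid() cannot hang
    int status = 0;
    waitpid(pid, &status, 0);
    return WIFSIGNALED(status) && WTERMSIG(status) == SIGTERM;
}
```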

Some remaining goals from last time include porting more software (especially with self-hosting in mind), improving the block device stack, making the system generally more stable, and improving the network stack, especially the TCP implementation. And, of course, there is always more hardware to improve support for.

Help Wanted!

We are always looking for new contributors. If you want to get involved in the project, you can search our GitHub issues for interesting tasks. Aside from contributions to the kernel and/or POSIX layer, we would be particularly interested in network drivers and drivers for additional file systems (other than ext2), since Managarm currently lacks good drivers in these areas.

If you want to get in touch with the team, you can find us on Discord or on irc.libera.chat in the #managarm channel. Also, if you want to support Managarm, please consider donating to the project!

Acknowledgements

We thank all contributors to the Managarm, mlibc and related projects.